Multimodal Affective Computing

2021–2027

Affective Computing

Signal Processing

Virtual Reality

Multimodal Learning

Overview

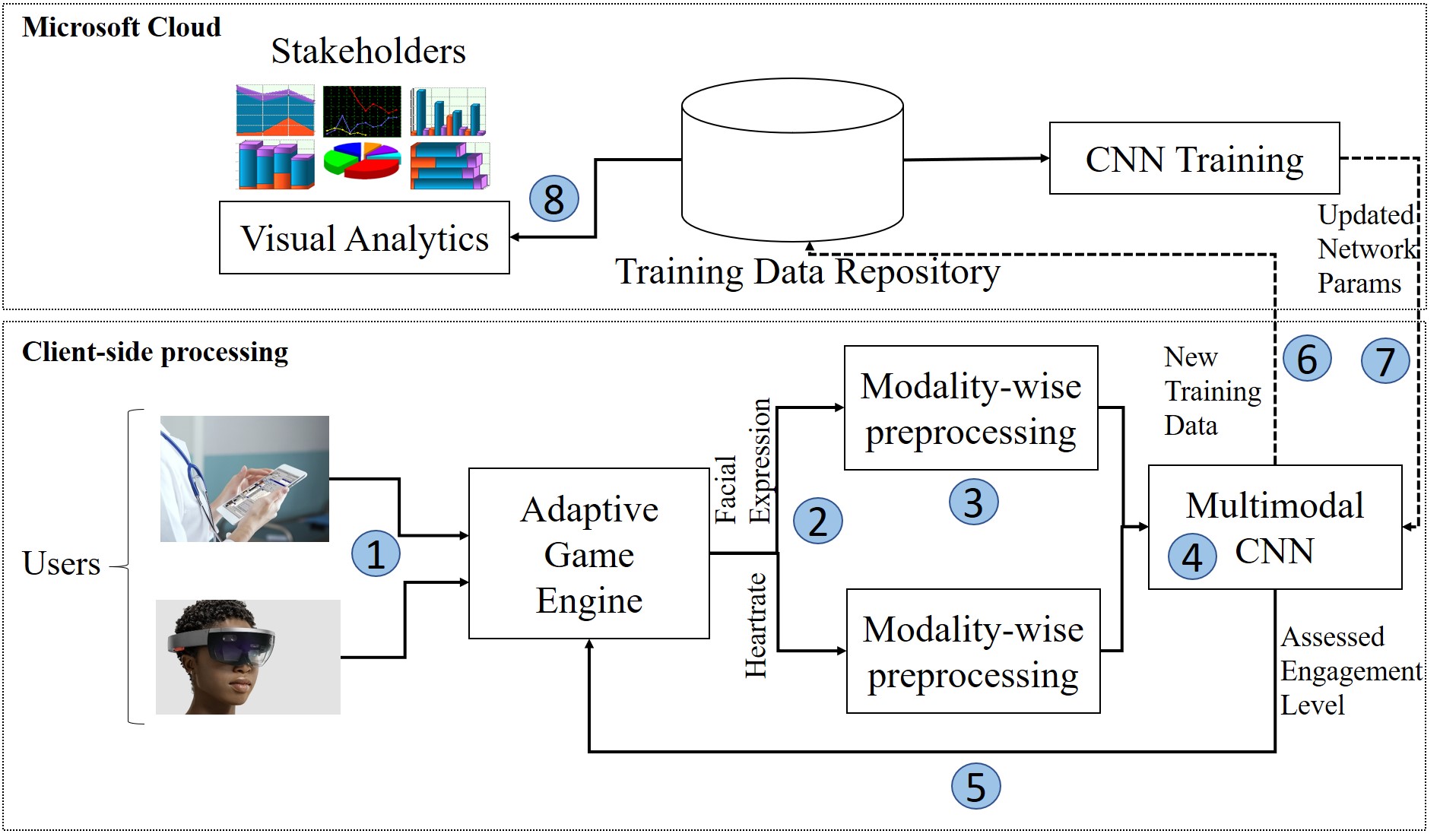

In collaboration with Shaftesbury (now Wellwave Inc.), we will develop a machine learning model for multimodal assessment of stress. The sensor inputs can be physiological (e.g. EEG, heart rate) or behavioural (e.g. facial expressions). The goal is to feed the assessed stress level into Shaftesbury’s Positive Distraction Entertainment System, which dynamically adapts game content to reduce stress in children before a complex medical procedure. Lowering stress beforehand can, in turn, reduce the procedure's complexity and the recovery time.

Funding

- NSERC Collaborative Research and Development Grant (CRD) - $450K

- NSERC Alliance + Mitacs Accelerate Grant - $488K

- New Frontiers in Research Fund - Exploration - $250K